Show Notes

In this video, I break down how you can dominate AI search results as a B2B SaaS company.

Check out how we ranked a B2B SaaS #1 in ChatGPT: https://youtu.be/eSBmFv7jb9Q

Connect on LinkedIn: https://www.linkedin.com/in/liamdunne05/

Twitter: https://x.com/saasliam

Instagram: https://instagram.com/saasliam

Nearly half of B2B buyers now use ChatGPT, Claude or Perplexity to research vendors before ever landing on your website. And if your company is not cited in those AI answers, you may be invisible to the fastest-growing segment of high-intent buyers.

Now, over the last couple of years, I've worked with dozens of B2B SaaS companies, some of which have scaled to tens of millions in profitable ARR. And most recently, I've been working with these companies to show up in AI search engines and drive a consistent flow of leads and customers from LLMs. Take our 30 million ARR B2B SaaS client, who we took from roughly 575 to over 3.5K trials from AI search alone in just 7 weeks. Or another B2B SaaS company, who we helped increase their citations by 600% and generate five paying customers in month one, bearing in mind that most SEO agencies say it takes 6 months to see results.

And so in this video I'm going to show you the exact system we use to dominate AI search results, explain why it's completely different to traditional SEO, and then break down in very clear steps how you can start seeing citations from AI within weeks or even days, not months. So let's dive into it.

Okay, so first let me explain why AI search is not just SEO 2.0. Most people think AEO is just SEO with a fresh lick of paint, and honestly this is a huge misconception. In traditional SEO, we're optimizing for ranking in search engine results pages, also known as the SERP, right?

Whereas in AEO, or AI search optimization, it's all about being cited and ultimately being the recommended choice in AI systems like ChatGPT, Claude or Perplexity. And this distinction matters because once you understand the differences between these surface areas, there are real downstream effects for how we as marketers or founders look at distribution and getting in front of our ideal customers.

All we need to do is take a look at the numbers. One in five Google searches included an AI summary as of March 2025. 48% of B2B buyers now use AI search while evaluating vendors, and that's from a 2024 HubSpot report, so the number is probably larger by now. And there's a projected 50% drop in traditional search by 2028 as more people shift from traditional search to these AI assistants. And ultimately what I think matters most is that visitors from AI search have been found to convert anywhere from 3 to 4x higher than traditional organic traffic. You can actually look at an Ahrefs report where they found that AI-referred traffic converted at 20 times the rate of organic search traffic.

But what most SaaS companies and SEO agencies don't understand is that being on page one, or even position one, on Google does not automatically translate to AEO success. There's really only about a 50% overlap between AI citations and top organic listings. Half or even more than half of citations are coming from completely different pages. And so what this means is that smaller companies with much worse SEO, maybe even worse products and services, are able to capture more share of voice in AI answers. Honestly, for the first time in something like 15 to 20 years, there's a huge shift happening in organic search dominance.

So with that being said, let me go through four critical differences between traditional SEO and AI search. Okay, so difference number one is keywords versus prompts.
So in traditional SEO, we targeted specific keywords like "project management software". The whole game of SEO really was to rank in positions one to three, or at the very minimum on page one, for these keywords. Whereas in AI search, there's this huge long tail of queries to target. Right? If you think about how users behave with these AI assistants, they're giving a lot of upfront context. And that might look like, "Hey, I'm a marketer at a 50-person startup, I have a 100k budget, and I'm looking for project management software that integrates with Slack."

And so what happens from here is the models take this user prompt and conduct what's known as query fan-out, which is basically breaking that initial prompt into several semantically varied queries. They might be looking for things like pricing, alternatives, integrations and use cases, so very, very targeted information. And so in AI search, or if we look at it through the lens of an AEO strategy, what we're really trying to do is capture as much of that long tail, as many of those subqueries, as possible. And the way we do this as a first step is by mapping out what we call your prompt universe: the entire map of prompts that your ICP is putting into AI assistants, rather than those short head terms and keywords like "project management software" where everyone else has been competing for the last decade.
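As a rough illustration of what mapping a prompt universe could look like in practice, here is a minimal Python sketch. It is a planning aid only, not how any AI assistant actually performs query fan-out. The facet lists (`ROLES`, `CONSTRAINTS`, `INTENTS`), the prompt template and the function name `build_prompt_universe` are all hypothetical; you would swap in the roles, constraints and intents your own ICP really uses.

```python
from itertools import product

# Hypothetical ICP facets for illustration; replace with what your buyers actually say.
ROLES = ["marketer at a 50-person startup", "head of operations at an agency"]
CONSTRAINTS = ["a $100k budget", "a need for Slack integration"]
INTENTS = ["pricing", "alternatives", "integrations", "use cases"]
CATEGORY = "project management software"


def build_prompt_universe(roles, constraints, intents, category):
    """Return one long-tail prompt per (role, constraint, intent) combination."""
    prompts = []
    for role, constraint, intent in product(roles, constraints, intents):
        prompts.append(
            f"I'm a {role} with {constraint}. "
            f"What should I know about {intent} for {category}?"
        )
    return prompts


prompt_universe = build_prompt_universe(ROLES, CONSTRAINTS, INTENTS, CATEGORY)
print(f"{len(prompt_universe)} prompts to track")
for prompt in prompt_universe[:3]:
    print("-", prompt)
```

Even two roles, two constraints and four intents already produce 16 distinct prompts, which is the point: the long tail grows multiplicatively, so a handful of head keywords cannot cover it.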

So difference number two is we're going from a generic list of 10 blue links in the SERP to personalized answers and recommendations. For the most part, Google gives everyone the same 10 blue links regardless of what their requirements, budget or use cases are. Whereas a really unique characteristic of AI assistants is personalization. These AI assistants like Claude, ChatGPT and Perplexity build knowledge graphs of each user that allow them to personalize their responses. And so you could really have two users who ask the exact same question in these AI assistants and get completely different answers and vendor recommendations based on their unique situation and constraints.

And what we see happen as part of this process is these AI models sort of reasoning through the answer, thinking to themselves, "Okay, well, since Liam mentioned Facebook ad analytics, and since Liam only has a budget of $1,000, I'm going to prioritize vendors and information with strong social media tracking and a cheap monthly package," because that is going to be more relevant for Liam than for the other people asking the same question.

Difference number three is backlinks versus cross-source corroboration. In SEO, agencies would heavily focus on getting you backlinks through sponsored listicles or even private blog networks. And this was really one of the main ways to improve your page rankings as well as your overall domain authority. Whereas AI systems don't care about this number that you have in Semrush or Ahrefs. As proof of this, we've been able to get brand-new domains inbound qualified sales calls through an AI search strategy, which was previously impossible through SEO. It would just take months to build up to that.

What these AI systems are doing is looking for agreement, i.e. corroboration, across multiple trusted sources such as G2, Quora and review sites, as well as places like Reddit, Wikipedia, press and documentation. And so when looking for this corroboration, if multiple third parties say your pricing is $99 a month, but your website says $79 a month, then AI is really going to trust the consensus over you. And this extends into all areas, such as features, integrations, differentiators and brand sentiment. Think of it this way: your website is your resume, where you're saying all great things about yourself and how good you are.

Whereas AI search is calling up your references to verify all those claims. And so if the claims you're making through the content on your website can't be corroborated by third-party sources, then it's really going to work against you in an AI search strategy.

Difference number four is ranking pages versus passage-level extraction. In classic SEO, we were trying to rank entire pages into a determined position, like position one or position three in the SERP. This is not the case for AI search. Through an AEO lens, each piece of content could have five to 10 worthy passages, i.e. blocks of text that get extracted by AI assistants. Those passages then get combined with multiple other sources of information, and then an answer is generated and personalized for the user on the other end. So there is really no fixed rank. There is no fixed position one or page one when we look at AI search. Like I said, two users with similar queries can receive unique answers based on the knowledge graph, or the history of conversations that user has had with those assistants. And this is why content quantity actually matters now: because we don't have that fixed position, there's less risk of cannibalization. Our content now needs to capture this huge long tail, the millions of unique queries that are now happening.

And then each piece of content can have several shots on target due to passage-level extraction, which is just wildly different to what we've been used to in traditional SEO.

By the way, before I continue, if at any point in this video you want us to handle this entire process for you so you can start ranking in AI search and generate a consistent flow of leads without having to worry about all these technical details, then click the first link in the description and book a free call with us. Let's jump back to the video.

Okay, so the first step of a solid AI search strategy is diagnosing your current AI visibility so you have a baseline to work from. Now, a huge issue is that most companies have zero idea where they stand in AI search. And what makes matters worse is they're working with SEO agencies that are reporting page-one rankings and keyword positions and say they're looking into AI visibility, but really there's no methodology. There's nothing happening under the hood. And if they've really drawn the short straw, this agency is incorrectly telling them that SEO is the same as AEO, it's all the same thing and there's nothing to worry about. Meanwhile, competitors are capturing the citations that you should be getting. And really, those competitors are influencing your company's narrative to your ideal customers in those crucial decision moments. And because fewer clicks are happening, since AI summarizes information for users and there's less reason to click through to websites, we need a new set of metrics to understand how we're appearing and how we're influencing decisions within these AI assistants.

And so these are the metrics we look at. We've got mention rate, which is how often your company is being mentioned in relevant answers, and we're measuring that as a percentage. We've then also got citation rate. This is how often your own information is being cited as a source in relevant answers, and we're also measuring that as a percentage. And then share of voice. This is your overall dominance within relevant answers, and we measure that as a percentage too. We view these as the main leading indicators to help you understand if you're appearing in the right moments, for the right set of high-intent or category prompts that your buyers are asking. And really the goal of an AEO strategy is to improve those leading indicators, because if they're improving, that's how you're going to feel business impact downstream.

Okay, so here's how to think about running your first AI visibility audit. There are really two main ways you can audit your AI visibility, and I would strongly recommend you do the second one. The first method is just to test a series of buyer-intent queries across the main models such as ChatGPT, Claude and Perplexity. And the reason you want to do it across all of the main models is that you are going to appear differently in each of them, so you want to get as much coverage as possible. The task here is really to see if your company is appearing in the answers and, if you are, how your company is being positioned and what sources of information are being cited, because that's how the narrative is being shaped. So you want to take note of any competitors that are showing up. You want to take note of the types of content as well as the source domains that are appearing in those citations, because these are going to be absolutely crucial when it comes to informing the rest of your AI search strategy.
For example, if your competitors are being mentioned in a certain third-party publication or they're appearing in certain subreddits and you're not, then this is going to be somewhere to focus as part of your AI search strategy.

The second, far more reliable and honestly just easier way to audit your AI visibility is by using one of the many software tools on the market at the moment, and we personally like to use Scrunch. The benefit of these tools is that they're going to do most of the work for you, which is going to save you a bunch of time. And most importantly, in most cases they're going to test in sandboxed environments, which means the answers or insights they give you are going to be far more reliable because they're not being influenced by your own workspace's memory. Remember when we talked about that personalization piece: if you're testing in your own workspace, that's going to heavily influence the answers you receive and possibly lead you astray. Now, for our clients at Discovered Labs, we use our own technology, our own software, to do this because it allows us to go much deeper.
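To make the three audit metrics described above concrete, here is a minimal Python sketch of how they could be computed from a batch of collected answers. The `Answer` record shape, the function name `visibility_metrics` and the sample data are hypothetical. In particular, the share-of-voice formula used here (your mentions divided by all brand mentions across the answers) is an assumption for illustration, since the video only describes it as "overall dominance"; audit tools may define it differently.

```python
from dataclasses import dataclass, field


@dataclass
class Answer:
    """One AI answer collected during an audit (hypothetical record shape)."""
    prompt: str
    brands_mentioned: list = field(default_factory=list)
    cited_domains: list = field(default_factory=list)


def visibility_metrics(answers, brand, own_domain):
    """Return mention rate, citation rate and share of voice as percentages."""
    total = len(answers)
    if total == 0:
        return {"mention_rate": 0.0, "citation_rate": 0.0, "share_of_voice": 0.0}
    # Answers that name the brand at all.
    mentions = sum(1 for a in answers if brand in a.brands_mentioned)
    # Answers that cite the brand's own domain as a source.
    citations = sum(1 for a in answers if own_domain in a.cited_domains)
    # Assumption: share of voice = our mentions / all brand mentions observed.
    all_mentions = sum(len(a.brands_mentioned) for a in answers)
    share = mentions / all_mentions if all_mentions else 0.0
    return {
        "mention_rate": 100 * mentions / total,
        "citation_rate": 100 * citations / total,
        "share_of_voice": 100 * share,
    }


# Made-up sample data purely to exercise the function.
sample = [
    Answer("best PM tool for startups", ["Acme", "Rival"], ["acme.example", "g2.com"]),
    Answer("PM tool with Slack integration", ["Rival"], ["reddit.com"]),
    Answer("cheap PM software", ["Acme"], ["g2.com"]),
    Answer("PM tool alternatives", ["Rival", "Other"], ["rival.example"]),
]
metrics = visibility_metrics(sample, brand="Acme", own_domain="acme.example")
print(metrics)
```

In this made-up sample the brand is mentioned in 2 of 4 answers but cited in only 1, which is the kind of gap the audit is meant to surface: third parties are shaping the narrative more than the company's own content.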

We're not constrained by the pricing or features of other products. And the insights we get from those audits feed directly into our clients' AEO strategy and give them a data advantage.

Now, we've found that after their first audit, most companies discover that competitors with worse SEO, or even in some cases a worse product or service, are dominating AI citations, because those competitors have taken AI search seriously and have a deliberate strategy. What most companies also notice is that their brand is being misrepresented within AI answers. This typically manifests as outdated pricing, features or information, all being pulled from third-party sources which of course aren't incentivized to update that information or put your company in the best light possible. And worst-case scenario, some companies find that competitors are literally providing information about your company to these models, essentially shaping your company's narrative to buyers in those crucial decision moments, which of course they're incentivized to do in their favor and not yours.

Now that we have an AI visibility baseline and we know how we're appearing in AI answers, the second step to an AI search strategy is creating content that's going to improve AI citations. Traditional SEO content is not optimal for earning AI citations. In AI search, we're dealing with probabilistic systems, which means we need more of a mathematical approach rather than a creative approach with lots of storytelling. And that's why we created our CITABLE framework. Every piece of content we do for clients is based on this, and it's honestly one of the main reasons we've been able to add tens of thousands of dollars in net new MRR for clients and outperform traditional SEO agencies who keep doing things the old way. So the first letter in the CITABLE framework is C, which stands for clear entity and structure.

In this step we're just trying to make it easy for AI models to understand the content, which is going to increase the chances of it getting into the final answer. We've found the easiest way to do this is to lead every piece of content with a two to four sentence BLUF (bottom line up front) or TL;DR. As you can see in this example here, this article is a pricing comparison, and we've just given the bottom line up front so that when AI agents are looking at this piece of content, they can get the full picture straight away and then find the relevant passages that they want to extract.

Okay. So the second letter is I, which stands for intent architecture. Intent architecture is how we structure content so that when buyers or AI ask one question, our content is already ready with the next five. We start with the primary query or question that we want to answer. Then throughout the content, we're covering adjacent intents like alternatives, integrations, varying use cases, pricing, limits and benchmarks. As part of that AI query fan-out process, we're trying to satisfy as much of it as possible so that the model doesn't need to go to a competitor, and we're really staying in the real estate that we own.

If we look at this listicle we've created, it's trying to satisfy the search intent of somebody evaluating AEO agencies. The model is going to take this user's prompt and conduct its query fan-out process to look for AEO agencies. And as part of that reasoning, it might ask itself questions like: Which of these agencies specialize in B2B SaaS, because the user is in B2B SaaS? Which agencies specialize in AEO specifically rather than just rebranded SEO? What techniques, tactics or methodology do they have? Which agencies have case studies of their work? Because these models want to provide the best user experience by providing trustworthy and relevant information.
So, they might be looking for case studies. What are the commercial terms of these agencies? That way the model can provide some pricing and some ranges back to the user. And so within this step, we're trying to ensure that our content captures as many of these subqueries as possible, both within individual pieces of content like this and across additional articles in a classic hub-and-spoke model.

Okay, so T stands for third-party validation. Third-party validation is how we prove our claims so these models trust us and we make it to the final answer. We deliberately seed G2, Capterra and Trustpilot reviews, as well as news and Reddit conversations, with consistent proof points so that when AI is checking any claims we make, checking for that corroboration and looking at these reputable sources, it sees the same story echoed back everywhere, not just on our website. Remember, AI systems trust external validation more than your own claims. Here we can see in this example piece of content, we're pulling in real reviews from trusted websites. So any claims we're making with regards to pricing, features, differentiators and competitors get supported by third-party reviews, which is going to really help with the grounding process.

That leads me on to the next letter, A, which stands for answer grounding. This is how we package pages so they read like pre-verified answers rather than marketing copy, which is tough to verify. There are a few ways we do this: open with direct 40 to 60 word responses, and then, importantly, back those responses with cited facts and quotable stats that link to authoritative sources. So we're giving AI everything it needs to lift a snippet, to extract a passage with confidence and attribution. Google's own research, their AGREE research, shows that models cite better when claims are explicitly grounded.
And so this is a bit of a meta example. In our own blog, where we talk about the CITABLE framework and the step of answer grounding, we're actually grounding that information in Google's AGREE research. Google is obviously going to be an authoritative domain, so from an AI perspective this information is going to be trusted because we're citing the original source that we pulled this part of the framework from.

So the next letter is B, which stands for block-structured for RAG. These AI models use retrieval-augmented generation, also known as RAG, which essentially involves chunking content into segments before it gets processed. So in your content, try to create self-contained blocks of information with clear headings, answer boxes, ordered lists, unordered lists and tables, so that agents can pull this information without working too hard or having to look elsewhere. We can see in this HubSpot blog here, they're using HTML tables. Now, I think where a lot of companies are getting this wrong is they're still heavily relying on images for comparisons and things like that. We want to put as much as possible into HTML content, which is going to be easier for these agents to extract. And we can actually see some Anthropic research here talking about RAG and contextual retrieval: by optimizing our pages for RAG, we're going to improve retrieval accuracy, which is only going to benefit us downstream. So basically the TL;DR here is that the easier we make it for these agents to retrieve information, the more likely we are to be cited in the final answer.

So the next letter is L, which stands for latest and consistent. AI systems heavily weight recency, especially for things like pricing, features and market positions, which are constantly changing and which they might not have in their training data.

And so there was actually a study where a group of people changed only the publish date on a sample set of content, and it was found to improve AI search performance by up to 25%. So it definitely matters. What we want to do here is include last-updated timestamps both on the page and in the schema markup so that they're easy to find.

But we must also consider consistency. If your website says your pricing is $99 a month, but an old TechCrunch article says it's $79 a month, then AI may skip citing you due to uncertainty, or it's just going to be feeding your ideal customers inaccurate, outdated information. The way we combat this is we develop what we call a set of facts. This is a regularly updated source of truth for information on product, pricing and differentiators. And we want to propagate that to as many of the third-party surface areas as possible so we have that consistency of message. This is especially important if you're in B2B SaaS, where the product, and really the wider ecosystem like integrations, is changing every quarter.

And then finally in the CITABLE framework we have E, which stands for entity graph and schema. This is how we spell out who we are connected to in a way that both humans and machines can follow. We want to make relationships very explicit in the actual copy within the content, for example "alternatives to X, Y and Z", "integrates with A, B and C", "is a competitor to these three companies", as well as implementing structured data in the form of schema within all of our content. Schema is absolute table stakes, but for clients we have seen just implementing schema on historical content uplift citations by something like 20 to 30%. It's table stakes because it's not going to give you an edge long term. But if you don't have it in place today, it can improve your citations.
And again, just to stress it, the real value comes from making entity relationships clear in both human-readable content, so your on-page content, and machine-readable markup. That's how you're going to hit things from both angles here. Okay. So that's how to structure your content.
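For the "latest and consistent" and "entity graph and schema" steps above, here is a minimal Python sketch that builds schema.org JSON-LD for an article, including a `dateModified` timestamp and explicit entity relationships. The properties used (`headline`, `datePublished`, `dateModified`, `about`, `mentions`) are standard schema.org `Article` properties, but the helper name `article_jsonld`, the product name `ExampleApp`, the headline and the dates are placeholders, and which schema types suit your pages is a judgment call rather than something the video specifies.

```python
import json
from datetime import date


def article_jsonld(headline, published, modified, product, related_products):
    """Build schema.org Article markup with freshness and entity relationships."""
    return {
        "@context": "https://schema.org",
        "@type": "Article",
        "headline": headline,
        "datePublished": published.isoformat(),
        # Machine-readable "last updated" date; mirror the same date visibly on the page.
        "dateModified": modified.isoformat(),
        # The primary entity the article is about.
        "about": {"@type": "SoftwareApplication", "name": product},
        # Other entities the copy explicitly relates to (integrations, alternatives).
        "mentions": [
            {"@type": "SoftwareApplication", "name": name} for name in related_products
        ],
    }


markup = article_jsonld(
    headline="ExampleApp pricing compared (placeholder headline)",
    published=date(2025, 1, 15),
    modified=date(2025, 6, 1),
    product="ExampleApp",
    related_products=["Slack"],
)

# Embed the JSON-LD in the page head as a script tag.
script_tag = (
    '<script type="application/ld+json">\n'
    + json.dumps(markup, indent=2)
    + "\n</script>"
)
print(script_tag)
```

The markup only covers the machine-readable half. The same relationships ("integrates with Slack") and the same last-updated date still need to appear in the visible copy so both sides tell one consistent story.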

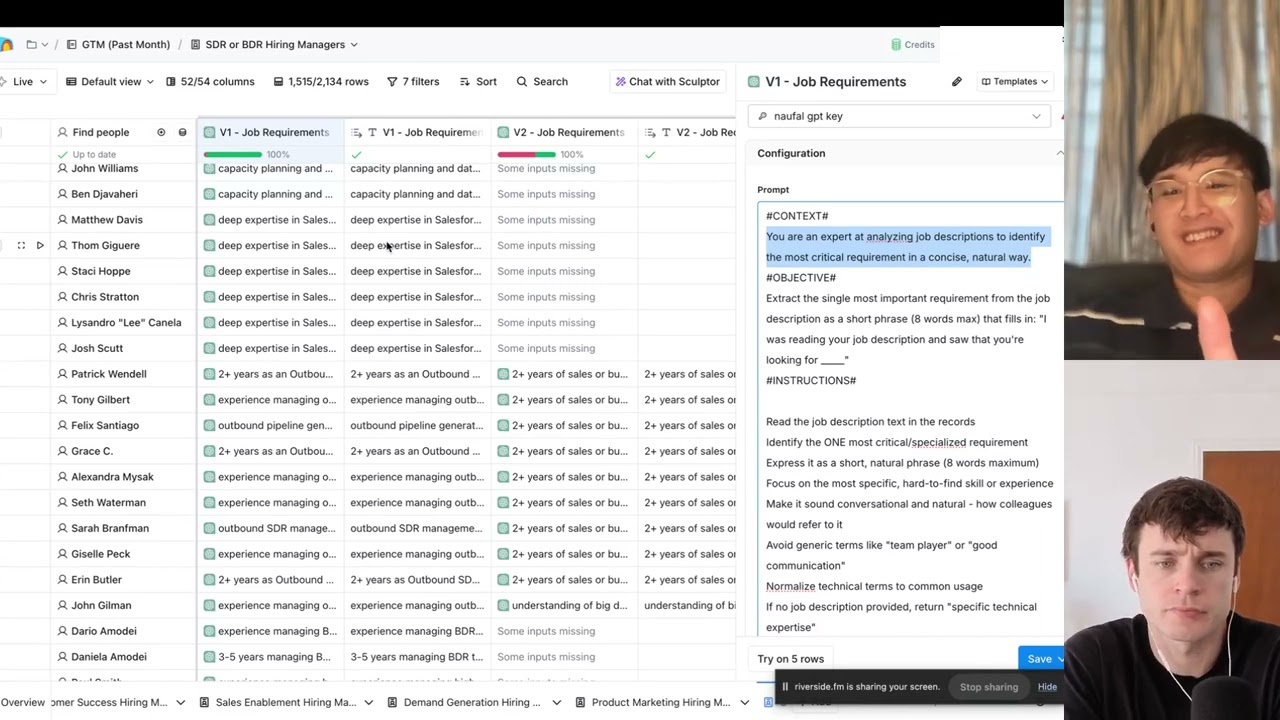

Now you have to actually do the content. And we're of the belief that daily content production for AI search optimization is non-negotiable. I think this might be a bit of a hot take, but content quantity actually matters now in AI search. And I think this is going to have the biggest impact on traditional SEO teams and agencies who think 10 to 15 blogs per month is acceptable. We're no longer trying to rank a single page in a fixed position in the SERP. Each piece of content that we publish could have five to 10 passage candidates, meaning many shots on target from one piece of content. And these LLMs need massive amounts of content and information, and they're constantly looking for fresh information to cite. So the demand for information is now greater.

If we just do some quick math on this, for each AI answer given to a user, the model might reference dozens of sources. We can see 36 sources just to answer this one question. And each one of these sources is likely to be an individual piece of content. If we consider that just ChatGPT, not the other platforms, has over 800 million weekly active users, that's a lot of prompts and therefore a lot of content being used as cited sources to shape those answers. And if we remember that these models are biased towards fresh information, there is a constant demand for new information. So if we look at the implications of this as marketers and founders, publishing daily content is going to give you far more chances to get cited, more shots on target, than a competitor who's only publishing 8 to 10 or 15 pieces of content per month. Let me share an example of this in practice.

So we worked with a B2B SaaS client whose SEO agency was publishing something like 10 to 12 articles per month. In just month one of working together, we 5xed their content volume, which by itself isn't something to brag about. But within that time period, we also outperformed their SEO agency by 3 to 5x on all search metrics as well as business impact from search. In fact, our content all ranked on page one, where the SEO agency's content ranked on pages three to four on average.

And look, you don't just have to take it from me. Look at some of the most popular SaaS companies that exist today. Even Ramp is publishing anywhere from four to 10 articles per day. So if anything, I don't think it's a question of quantity versus quality. Really, it's just an operational constraint that you need to solve for. The way we think about balancing both quantity and quality is an AI-assisted, human-in-the-loop workflow. We're using AI to help with efficiency, but SEO managers or experts oversee everything. Every piece of content we build is based on the CITABLE framework. And for every single piece of content, we have tens of thousands of words of context, such as research, fact-check reports, source citations, link candidates, UGC and YouTube videos, all being analyzed and used to help with drafting that content.

So we're very much using AI to help with that process. And then that's handed off to an expert who goes through our editorial and QA process to ensure that it's high quality. Okay, so that's content out of the way.

The third step to an AI search strategy is building a trusted off-page presence. It's really important to understand that in the context of AI search, your website is only 20% of the battle. AI models are crawling the entire internet looking for corroboration. So if your competitors are seeding information in off-page places such as Reddit, or they're dominating you from a reviews perspective, that's really going to hurt your AI search performance, and they're going to be able to get into more of those answers.

Something we need to factor in when we're looking at off-page presence is that we're dealing with different companies here. OpenAI is different to Anthropic, which is different to Perplexity. They all have their own preferences in how they cite information. So as part of a broad AEO strategy, we also need to consider those different surface areas. For example, ChatGPT loves Wikipedia and Reddit. There's a huge commercial contract between OpenAI and Reddit. And as part of our own internal research, we've seen that Reddit disproportionately influences ChatGPT's responses relative to any other site. So it's huge. If you want to win in ChatGPT, you need to be on Reddit. And then if we look at Google, any Google product like Gemini or AI Overviews is obviously going to prefer other Google products such as YouTube. So if you want to dominate there, having a YouTube presence is probably not a bad idea, and probably not a bad idea in general when it comes to organic search.

Okay, moving on. The final piece of a solid AI search strategy is your technical foundations.

Now, I'll be the first to say that the technical stuff is not sexy, but you really can't skip this. A human searcher may be okay with having to dig through your website, maybe waiting a few seconds for it to load. But if agents can't understand your company and navigate your website efficiently, then they're just going to bypass you and go to another source. We look at the technical side through four buckets.

The first bucket is indexability and crawling optimization. Basically, what we're trying to solve for here is: can AI easily crawl your website, and is your content indexed? You'd be surprised at the state of companies' websites out there. We work with Series A to Series D companies, so these are not immature companies, and there have been instances where 20 to 30% of their content isn't even indexed. If your content isn't indexed, then it's not going to be discovered by AI, and it's not even going to be discovered by Google. So you're just shooting yourself in the foot.

The second bucket is architecture. This is basically the map of your website. The most common mistakes here are duplicated content and bad URL hygiene, which again just make it difficult for AI to know what content to prioritize so that it can cite the right content in the generated answer to users.

The third bucket is structured data. As I mentioned before, structured data such as page schema should be on all key pages. This is going to help AI understand you at a company level, but also understand where in individual pieces of content it should look to extract snippets.

And then finally, the fourth bucket is website performance. The goal here is very simple: ensure your website loads as fast as possible so that both humans and AI can access the information they need. Because again, with AI agents, a slow website is no bueno.

If they can't access your website fast enough, they're just going to go to another source of information that's making it easier for them.

Okay, so with all that being said, if you're serious about winning AI search and you want a custom strategy for your company, then book a call with me personally using the link in the description. On that call, we're going to audit your AI visibility across all of the major AI platforms. Then I'm going to show you exactly where you stand today, where the gaps are, benchmark you against your competitors, and outline a roadmap of what we would do if we were to work together to help you get more customers from these LLMs. So again, click that link in the description and book a call to speak with me. And if you want to see a full breakdown of how we ranked a SaaS company number one in ChatGPT, then watch this video.
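As a small follow-up to the first technical bucket (crawlability), here is a minimal Python sketch that checks whether a `robots.txt` file allows some well-known AI crawlers to fetch a given URL, using the standard library's `urllib.robotparser`. The sample `robots.txt` content and the `example.com` URL are made up for illustration, the helper name `crawler_access` is hypothetical, and the user-agent list is an assumption you should verify against each vendor's current documentation. Passing this check says nothing about whether a page is actually indexed; it only rules out one common blocker.

```python
from urllib.robotparser import RobotFileParser

# Assumed crawler user agents; confirm the current names in each vendor's docs.
AI_CRAWLERS = ["GPTBot", "ClaudeBot", "PerplexityBot"]

# Made-up robots.txt that blocks one AI crawler and allows everything else.
SAMPLE_ROBOTS_TXT = """\
User-agent: GPTBot
Disallow: /

User-agent: *
Allow: /
"""


def crawler_access(robots_txt, url, user_agents):
    """Return {user_agent: allowed?} for one URL under the given robots.txt text."""
    parser = RobotFileParser()
    parser.parse(robots_txt.splitlines())
    return {agent: parser.can_fetch(agent, url) for agent in user_agents}


access = crawler_access(
    SAMPLE_ROBOTS_TXT, "https://www.example.com/blog/pricing", AI_CRAWLERS
)
for agent, allowed in access.items():
    print(f"{agent}: {'allowed' if allowed else 'blocked'}")
```

To run this against a live site, you could call `parser.set_url(...)` followed by `parser.read()` instead of `parse(...)`, which fetches the real `robots.txt` over the network.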